What is PTE Academic?

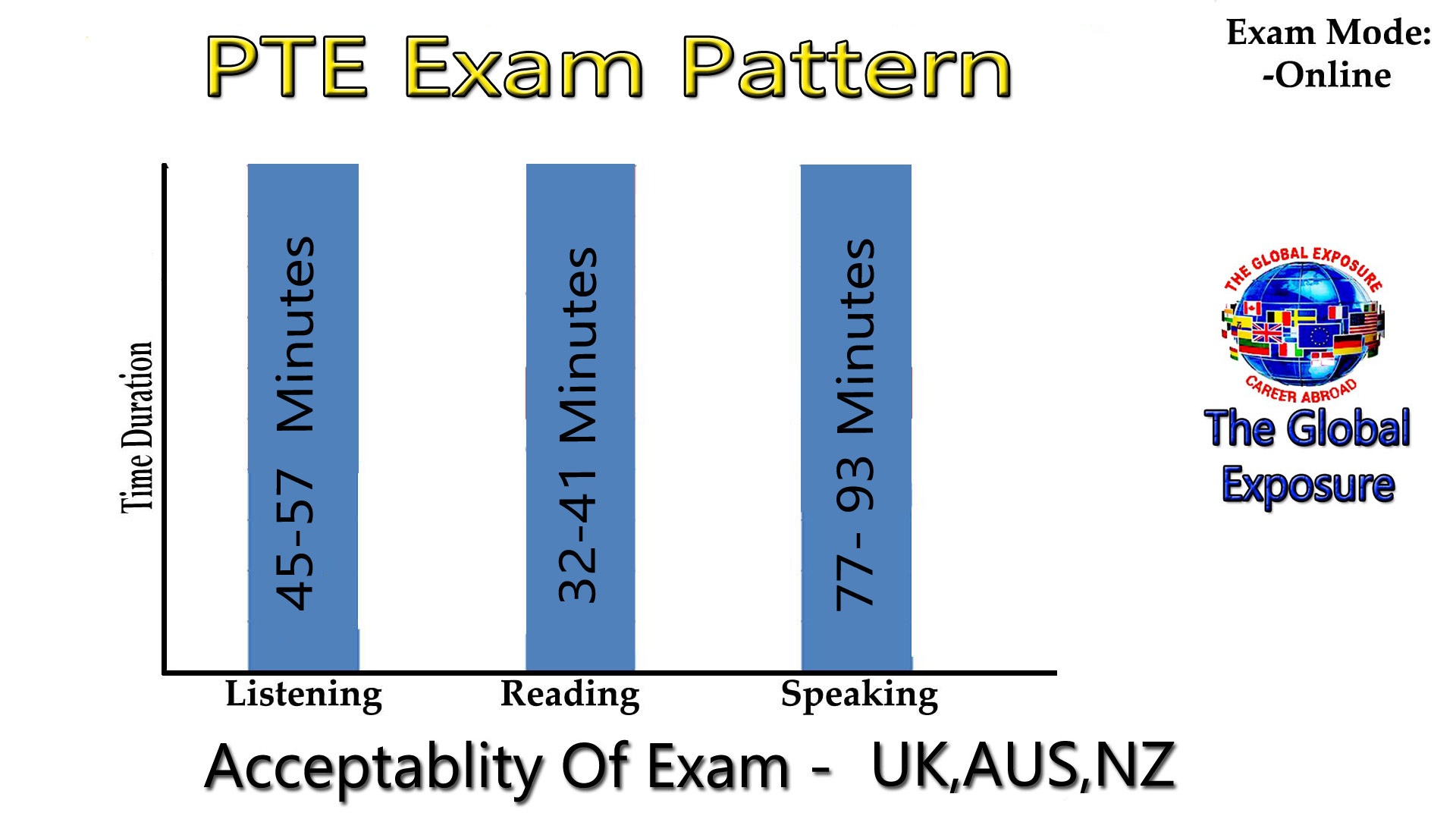

PTE Academic is a three-hour, computer-based assessment of a person’s English language ability in an academic context. The test assesses an individual’s communicative skills of Reading, Writing, Listening and Speaking through questions using authentically sourced material. In addition, the test provides feedback on enabling skills in the form of Oral Fluency, Grammar, Vocabulary, Written Discourse, Pronunciation and Spelling.

When was PTE Academic launched?

PTE Academic was launched in 2009 after a comprehensive research program led by some of the world’s leading experts in language assessment.

Where is PTE Academic recognised?

Since 2009, PTE Academic has been rapidly accepted by universities, governments, professional bodies and employers around the world as a valid and reliable assessment of academic English proficiency. We at The Global Exposure can help you.

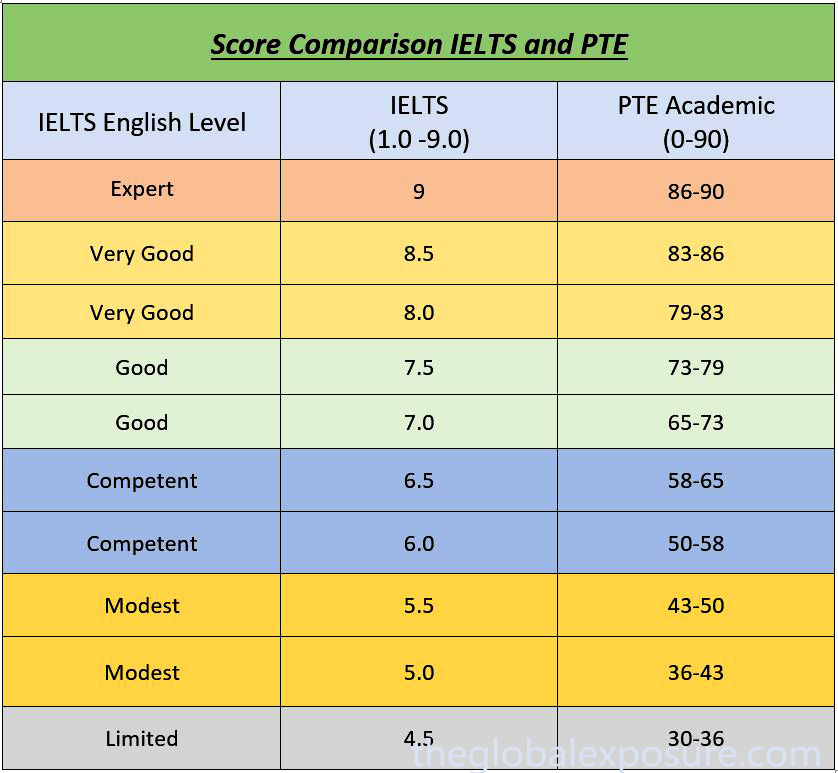

How is PTE Academic scored?

PTE Academic is assessed using automated scoring technology, and test takers are provided with a score from 10 to 90 overall and across all skills. Pearson’s 10-90 scale is based on the Global Scale of English.

How accurate is PTE Academic?

The scoring systems use complex algorithms which are initially trained using human-rated samples.

Thousands of marks and examples of performance are used to train these automated scoring systems until sufficiently high levels of reliability are achieved. The field testing of PTE involved assessing responses from over 10,000 candidates from more than 120 different language groups.

For the speaking component, nearly 400,000 responses were collected and marked by human raters. The correlation between the human scores and the machine scores for an overall measure of speaking was 0.96, which supports the reliability of the measure of speaking in PTE Academic. The measure used to assess how accurately a language test gauges an individual’s ability is known as the ‘Standard Error of Measurement’, or SEM.

According to data published by some of the other major English tests recognised by government bodies and higher education institutions, PTE Academic has the highest reliability estimates of all the major academic English tests, based on the SEM, for both the overall score and the communicative skills scores.
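For readers curious about how these two statistics are usually calculated, here is a minimal Python sketch. It is not Pearson's scoring code. It assumes the standard Pearson correlation coefficient for comparing human and machine scores, and the classical test theory formula SEM = SD × √(1 − reliability). The function names and all sample scores are hypothetical and invented purely for illustration; they are not PTE Academic data.

```python
import math
from typing import Sequence


def pearson_correlation(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equally long lists of scores."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of the same length (at least 2 items)")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when all scores are identical")
    return covariance / math.sqrt(var_x * var_y)


def standard_error_of_measurement(score_sd: float, reliability: float) -> float:
    """Classical test theory: SEM = SD * sqrt(1 - reliability)."""
    if score_sd < 0:
        raise ValueError("standard deviation cannot be negative")
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must be between 0 and 1")
    return score_sd * math.sqrt(1.0 - reliability)


if __name__ == "__main__":
    # Hypothetical scores on a 10-90 scale, made up for this example only.
    human_scores = [42.0, 55.0, 61.0, 70.0, 83.0]
    machine_scores = [44.0, 53.0, 63.0, 69.0, 81.0]
    r = pearson_correlation(human_scores, machine_scores)
    print(f"human vs machine correlation: {r:.3f}")

    # Hypothetical standard deviation and reliability estimate.
    sem = standard_error_of_measurement(score_sd=10.0, reliability=0.96)
    print(f"SEM: {sem:.2f} score points")
```

A smaller SEM means that a test taker's reported score is likely to sit closer to their true ability, which is why a lower SEM is read as a sign of a more reliable test.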

For more information on Standard Error of Measurement (SEM) and scoring concordance of PTE Academic with other major English language assessments, please refer to pp. 42-50 of the PTE Academic Score Guide.